PyTorch-Ignite

Training and evaluating neural networks flexibly and transparently

Victor, Sylvain & Taras

Slides: https://vfdev-5.github.io/ptcv21-pytorch-ignite-slides

Content

- PyTorch-Ignite: what and why?

- Quick-start example

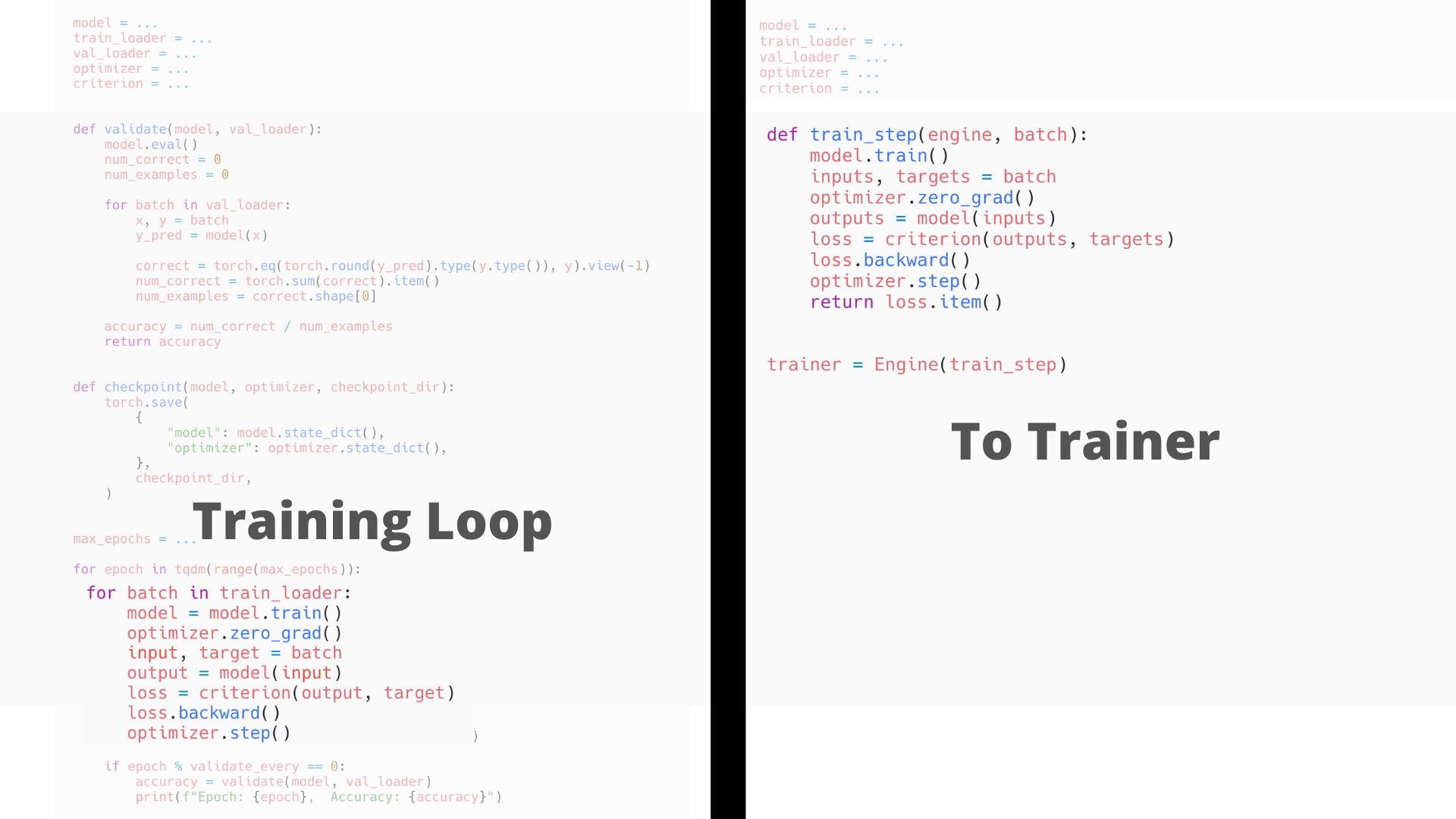

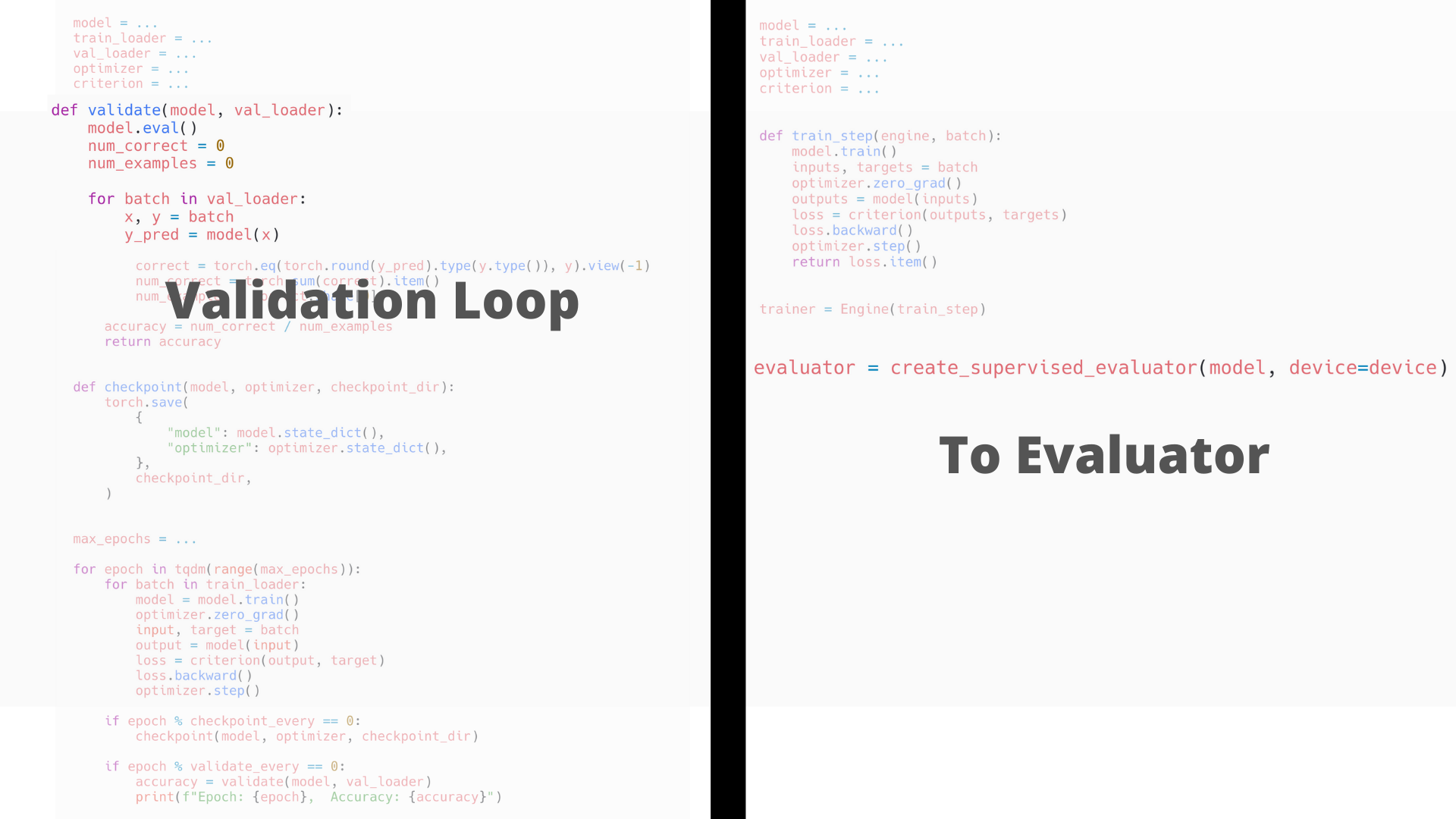

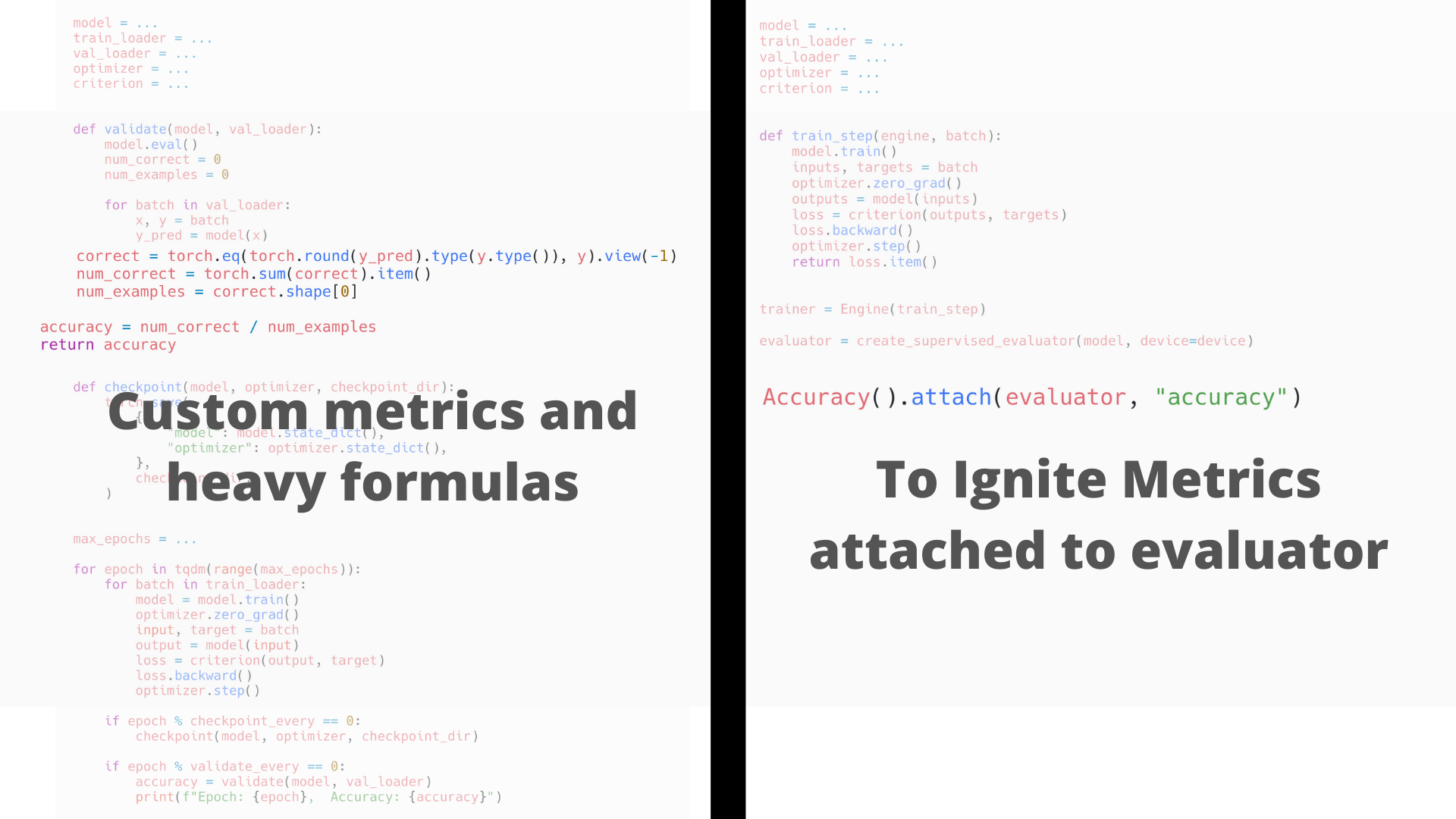

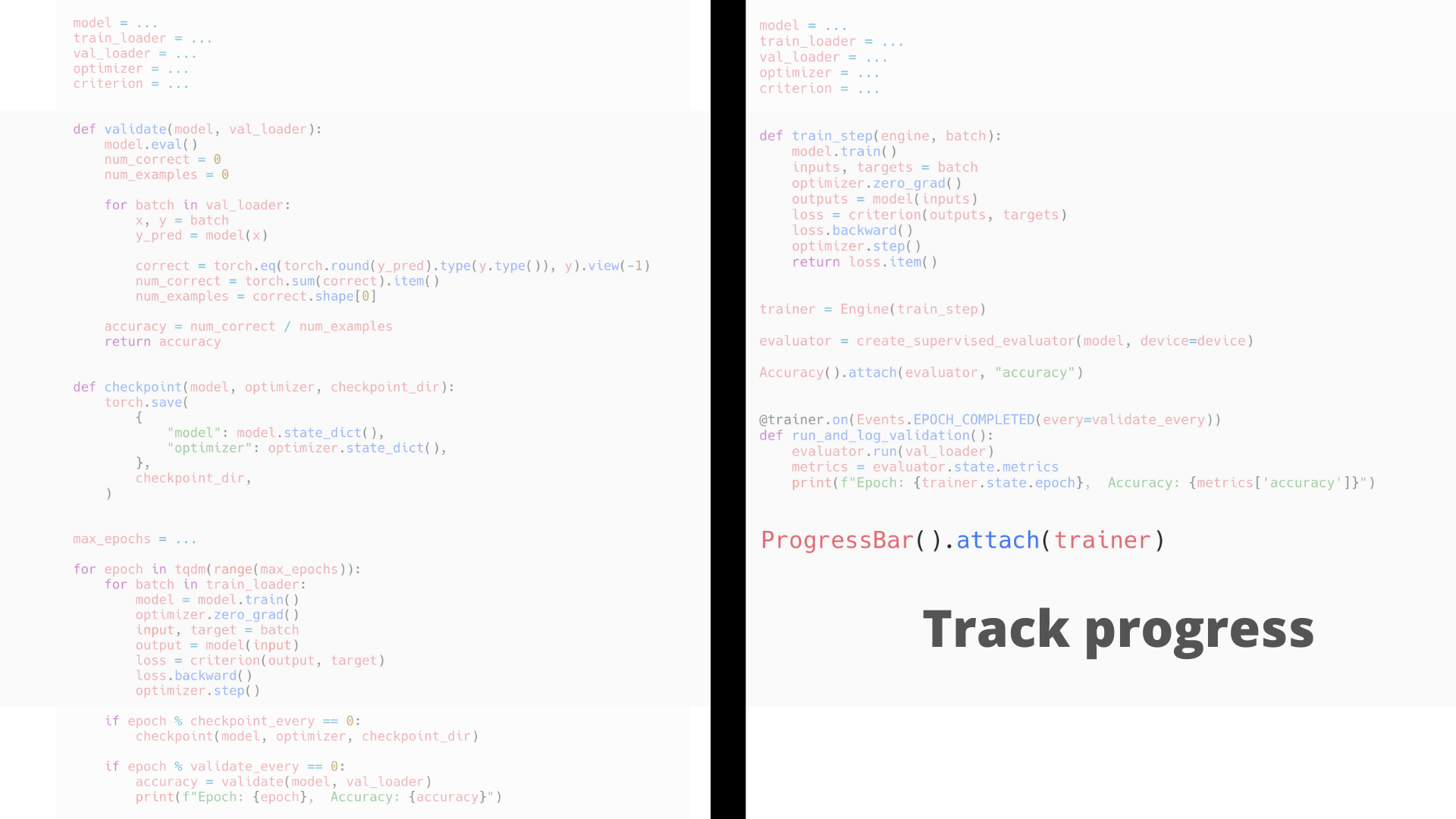

- Convert PyTorch to Ignite

- About the project

PyTorch-Ignite: what and why? 🤔

High-level library to help with training and evaluating neural networks in PyTorch flexibly and transparently.


What makes PyTorch-Ignite unique?

- Composable and interoperable components

- Simple and understandable code

- Open-source community involvement

Alice: How does it differ from other similar projects?

Bob: See other PyTorch Community Voices editions :)

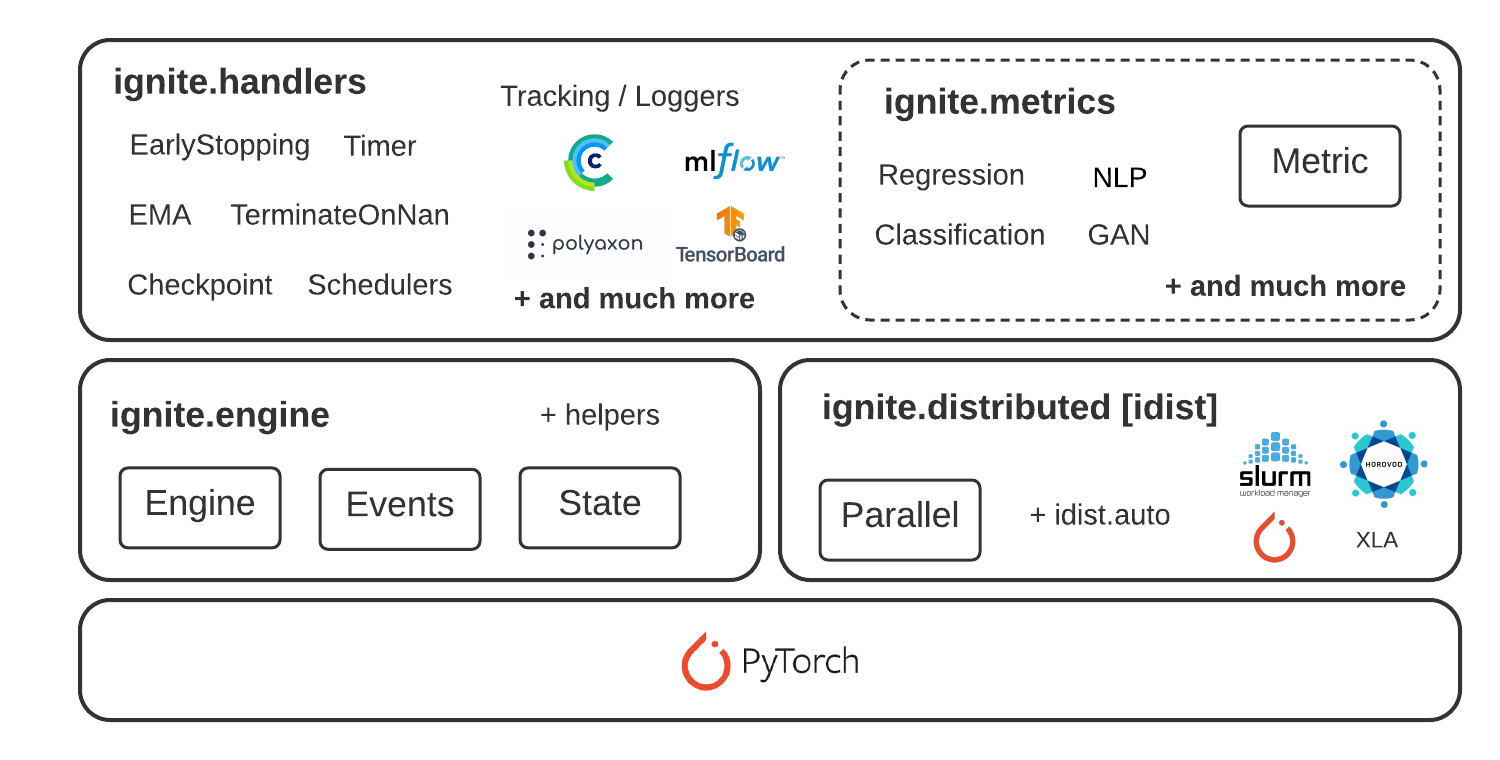

Key concepts in a nutshell

PyTorch-Ignite is about:

- Engine and Event System

- Out-of-the-box metrics to easily evaluate models

- Built-in handlers to compose training pipeline

- Distributed Training support

Engine and Event System

In its simplest form:

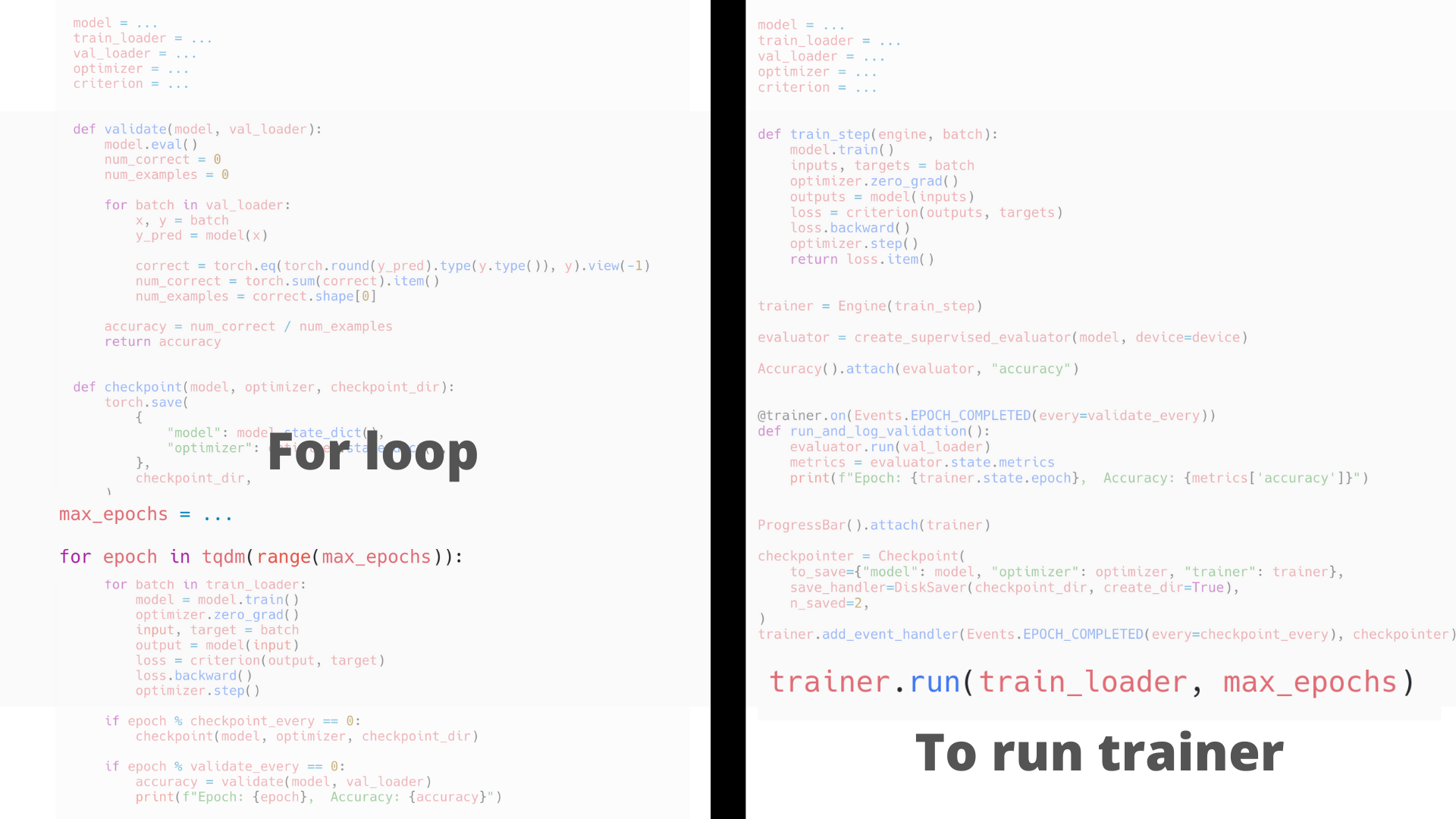

Simplified training and validation loop

No more coding for/while loops on epochs and iterations. Users instantiate engines and run them.

    from ignite.engine import Engine, Events, create_supervised_evaluator
    from ignite.metrics import Accuracy

    # Setup training engine:
    def train_step(engine, batch):
        # Users can do whatever they need on a single iteration
        # Eg. forward/backward pass for any number of models, optimizers, etc.
        # ...
        pass

    trainer = Engine(train_step)

    # Setup single model evaluation engine
    evaluator = create_supervised_evaluator(model, metrics={"accuracy": Accuracy()})

    def validation():
        state = evaluator.run(validation_data_loader)
        # print computed metrics
        print(trainer.state.epoch, state.metrics)

    # Run model's validation at the end of each epoch
    trainer.add_event_handler(Events.EPOCH_COMPLETED, validation)

    # Start the training
    trainer.run(training_data_loader, max_epochs=100)
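
To make the "no more for/while loops" idea concrete, here is a minimal pure-Python sketch of what an engine does for the user. `MiniEngine` and its string event names are hypothetical, written only for illustration; this is not Ignite's implementation.

```python
from collections import defaultdict


class MiniEngine:
    """Toy stand-in for an engine: owns the epoch/iteration loops and fires events."""

    def __init__(self, process_function):
        self.process_function = process_function
        self.handlers = defaultdict(list)
        self.epoch = 0
        self.iteration = 0
        self.output = None

    def add_event_handler(self, event_name, handler):
        self.handlers[event_name].append(handler)

    def _fire(self, event_name):
        # Call every handler attached to this event, in attachment order
        for handler in self.handlers[event_name]:
            handler(self)

    def run(self, data, max_epochs=1):
        self._fire("started")
        for _ in range(max_epochs):
            self.epoch += 1
            self._fire("epoch_started")
            for batch in data:
                self.iteration += 1
                self.output = self.process_function(self, batch)
                self._fire("iteration_completed")
            self._fire("epoch_completed")
        self._fire("completed")
        return self


def train_step(engine, batch):
    # Stand-in for a forward/backward pass: just sum the batch
    return sum(batch)


epochs_seen = []
trainer = MiniEngine(train_step)
trainer.add_event_handler("epoch_completed", lambda e: epochs_seen.append(e.epoch))
trainer.run([[1, 2], [3, 4], [5, 6]], max_epochs=2)
print(trainer.iteration, trainer.output, epochs_seen)
```

The user only writes `train_step` and the handlers; the loops live inside `run`.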

Power of Events & Handlers 🚀

1. Execute any number of functions whenever you wish

Handlers can be any function: e.g. lambda, simple function, class method, etc.

    trainer.add_event_handler(Events.STARTED, lambda _: print("Start training"))

    # attach handler with args, kwargs
    mydata = [1, 2, 3, 4]
    logger = ...

    def on_training_ended(data):
        print(f"Training is ended. mydata={data}")
        # User can use variables from another scope
        logger.info("Training is ended")

    trainer.add_event_handler(Events.COMPLETED, on_training_ended, mydata)

    # call any number of functions on a single event
    trainer.add_event_handler(Events.COMPLETED, lambda engine: print(engine.state.times))

    @trainer.on(Events.ITERATION_COMPLETED)
    def log_something(engine):
        print(engine.state.output)

Power of Events & Handlers

2. Built-in events filtering and stacking

    # run the validation every 5 epochs
    @trainer.on(Events.EPOCH_COMPLETED(every=5))
    def run_validation():
        # run validation
        pass

    @trainer.on(Events.COMPLETED | Events.EPOCH_COMPLETED(every=10))
    def run_another_validation():
        # ...
        pass

    # change some training variable once on 20th epoch
    @trainer.on(Events.EPOCH_STARTED(once=20))
    def change_training_variable():
        # ...
        pass

    # Trigger handler with a custom-defined frequency
    # (first_x_iters is a user-defined filter taking (engine, event) and returning a bool)
    @trainer.on(Events.ITERATION_COMPLETED(event_filter=first_x_iters))
    def log_gradients():
        # ...
        pass
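
The filtering rules above can be sketched in plain Python. This is a toy model of the semantics only, assuming a 1-based event counter; `make_filter` and `fired_counts` are hypothetical helpers, and the toy filter takes just the counter, whereas an Ignite `event_filter` receives `(engine, event)`.

```python
def make_filter(every=None, once=None, event_filter=None):
    """Return a predicate over the 1-based event counter."""
    if every is not None:
        return lambda count: count % every == 0
    if once is not None:
        return lambda count: count == once
    if event_filter is not None:
        return event_filter
    return lambda count: True  # no filter: every occurrence passes


def first_x_iters(count, x=3):
    # Hypothetical custom filter: only the first x occurrences pass
    return count <= x


def fired_counts(predicate, total):
    """List the counter values (1..total) on which a handler would be called."""
    return [c for c in range(1, total + 1) if predicate(c)]


every_5 = fired_counts(make_filter(every=5), 20)
once_20 = fired_counts(make_filter(once=20), 20)
first_3 = fired_counts(make_filter(event_filter=first_x_iters), 20)
print(every_5, once_20, first_3)
```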

Power of Events & Handlers

3. Custom events to go beyond standard events

    from ignite.engine import EventEnum

    # Define custom events
    class BackpropEvents(EventEnum):
        BACKWARD_STARTED = 'backward_started'
        BACKWARD_COMPLETED = 'backward_completed'
        OPTIM_STEP_COMPLETED = 'optim_step_completed'

    def train_step(engine, batch):
        # ...
        loss = criterion(y_pred, y)
        engine.fire_event(BackpropEvents.BACKWARD_STARTED)
        loss.backward()
        engine.fire_event(BackpropEvents.BACKWARD_COMPLETED)
        optimizer.step()
        engine.fire_event(BackpropEvents.OPTIM_STEP_COMPLETED)
        # ...

    trainer = Engine(train_step)
    trainer.register_events(*BackpropEvents)

    @trainer.on(BackpropEvents.BACKWARD_STARTED)
    def function_before_backprop(engine):
        # ...
        pass
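
A stdlib-only sketch of the register/fire pattern shown above, to illustrate the order in which handlers run inside a step. `TinyEngine` is a hypothetical toy class, not Ignite's `Engine`, and the strings appended to `trace` stand in for the real backward pass and optimizer step.

```python
from enum import Enum


class BackpropEvents(Enum):
    BACKWARD_STARTED = "backward_started"
    BACKWARD_COMPLETED = "backward_completed"
    OPTIM_STEP_COMPLETED = "optim_step_completed"


class TinyEngine:
    """Toy engine: handlers can only be attached to registered events."""

    def __init__(self):
        self.allowed = set()
        self.handlers = {}

    def register_events(self, *events):
        self.allowed.update(events)

    def on(self, event):
        if event not in self.allowed:
            raise ValueError(f"{event} is not registered")

        def decorator(fn):
            self.handlers.setdefault(event, []).append(fn)
            return fn

        return decorator

    def fire_event(self, event):
        for fn in self.handlers.get(event, []):
            fn(self)


engine = TinyEngine()
engine.register_events(*BackpropEvents)
trace = []


@engine.on(BackpropEvents.BACKWARD_STARTED)
def before_backprop(e):
    trace.append("handler: before backward")


@engine.on(BackpropEvents.OPTIM_STEP_COMPLETED)
def after_step(e):
    trace.append("handler: after optimizer step")


def train_step(e):
    e.fire_event(BackpropEvents.BACKWARD_STARTED)
    trace.append("backward")  # stand-in for loss.backward()
    e.fire_event(BackpropEvents.BACKWARD_COMPLETED)  # no handler attached
    trace.append("optimizer step")  # stand-in for optimizer.step()
    e.fire_event(BackpropEvents.OPTIM_STEP_COMPLETED)


train_step(engine)
print(trace)
```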

Out-of-the-box metrics 📈

50+ distributed ready out-of-the-box metrics to easily evaluate models.

- Dedicated to many Deep Learning tasks

- Easily composable to assemble a custom metric

- Easily extendable to create custom metrics

    from ignite.metrics import Precision, Recall

    precision = Precision(average=False)
    recall = Recall(average=False)
    F1_per_class = (precision * recall * 2 / (precision + recall))
    F1_mean = F1_per_class.mean()  # torch mean method
    F1_mean.attach(engine, "F1")
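
As a worked example of the arithmetic behind the composed F1 metric, the sketch below applies the same formula to plain Python lists. The per-class precision and recall values are made up for illustration, and the zero-division guard is an assumption of this sketch.

```python
def f1_per_class(precision, recall):
    """F1 = 2 * P * R / (P + R), computed class by class."""
    return [
        2 * p * r / (p + r) if (p + r) > 0 else 0.0  # guard is this sketch's choice
        for p, r in zip(precision, recall)
    ]


# Hypothetical per-class values for a 3-class problem
precision = [0.5, 1.0, 0.8]
recall = [1.0, 0.5, 0.8]

f1 = f1_per_class(precision, recall)
f1_mean = sum(f1) / len(f1)
print([round(v, 4) for v in f1], round(f1_mean, 4))
```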

Built-in Handlers


Distributed Training support

Run the same code across all supported backends seamlessly

- Backends from native torch distributed configuration: `nccl`, `gloo`, `mpi`

- Horovod framework with `gloo` or `nccl` communication backend

- XLA on TPUs via `pytorch/xla`

    import ignite.distributed as idist

    def training(local_rank, *args, **kwargs):
        dataloader_train = idist.auto_dataloader(dataset, ...)

        model = ...
        model = idist.auto_model(model)

        optimizer = ...
        optimizer = idist.auto_optim(optimizer)

    backend = 'nccl'  # or 'gloo', 'horovod', 'xla-tpu' or None
    with idist.Parallel(backend) as parallel:
        parallel.run(training)

Distributed Training support

Distributed launchers

Handle distributed launchers with the same code

- `torch.multiprocessing.spawn`

- `torch.distributed.launch`

- `horovodrun`

- `slurm`

Distributed Training support

Unified Distributed API

High-level helper methods

- `idist.auto_model()`

- `idist.auto_optim()`

- `idist.auto_dataloader()`

Collective operations

`all_reduce`, `all_gather`, and more
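
For readers new to collective operations, here is a single-process simulation of what `all_reduce` (with a sum) and `all_gather` return on each rank. The functions and the per-rank counts below are hypothetical and only model the semantics; they involve no real communication.

```python
def all_reduce_sum(values_per_rank):
    """Every rank ends up with the sum of all ranks' values."""
    total = sum(values_per_rank)
    return [total for _ in values_per_rank]


def all_gather(values_per_rank):
    """Every rank ends up with the list of all ranks' values."""
    gathered = list(values_per_rank)
    return [list(gathered) for _ in values_per_rank]


# Hypothetical per-rank counts from evaluating one data shard on each of 4 processes
correct_per_rank = [45, 50, 48, 47]
seen_per_rank = [50, 50, 50, 50]

total_correct = all_reduce_sum(correct_per_rank)
total_seen = all_reduce_sum(seen_per_rank)

# After the reduction, every rank can compute the same global accuracy
accuracy_per_rank = [c / s for c, s in zip(total_correct, total_seen)]
print(accuracy_per_rank)
print(all_gather(correct_per_rank)[0])
```

This is the idea behind "distributed ready" metrics: each process accumulates local counts, and a reduction makes the global value available everywhere.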

The Big Picture

Quick-start example 👩‍💻👨‍💻

Let’s train a MNIST classifier with PyTorch-Ignite!

Installation

With pip:

$ pip install pytorch-ignite

or with conda:

$ conda install ignite -c pytorch

Import, import, import…

    import torch
    from torch import nn
    from torch.utils.data import DataLoader
    from torchvision.datasets import MNIST
    from torchvision.models import resnet18
    from torchvision.transforms import Compose, Normalize, ToTensor

    from ignite.engine import Events, create_supervised_trainer, create_supervised_evaluator
    from ignite.metrics import Accuracy, Loss
    from ignite.handlers import ModelCheckpoint, global_step_from_engine
    from ignite.contrib.handlers import TensorboardLogger

Start with a PyTorch code

    data_transform = Compose([ToTensor(), Normalize((0.1307,), (0.3081,))])

    train_dataset = MNIST(download=True, root=".", transform=data_transform, train=True)
    val_dataset = MNIST(download=True, root=".", transform=data_transform, train=False)

    train_loader = DataLoader(train_dataset, batch_size=128, shuffle=True)
    val_loader = DataLoader(val_dataset, batch_size=256, shuffle=False)

    class Net(nn.Module):

        def __init__(self):
            super(Net, self).__init__()
            self.model = resnet18(num_classes=10)
            self.model.conv1 = nn.Conv2d(1, 64, kernel_size=3, padding=1, bias=False)

        def forward(self, x):
            return self.model(x)

    device = "cuda"
    model = Net().to(device)

    optimizer = torch.optim.RMSprop(model.parameters(), lr=0.005)
    criterion = nn.CrossEntropyLoss()

Here goes PyTorch-Ignite!

    trainer = create_supervised_trainer(model, optimizer, criterion, device)

    val_metrics = {
        "accuracy": Accuracy(),
        "loss": Loss(criterion)
    }
    evaluator = create_supervised_evaluator(model, metrics=val_metrics, device=device)

- `trainer`: engine to train the model

- `evaluator`: engine to compute metrics on validation set + save the best models

Add handlers for logging the progress

    @trainer.on(Events.ITERATION_COMPLETED(every=100))
    def log_training_loss(engine):
        print(f"Epoch[{engine.state.epoch}], Iter[{engine.state.iteration}] Loss: {engine.state.output:.2f}")

    @trainer.on(Events.EPOCH_COMPLETED)
    def log_validation_results(trainer):
        evaluator.run(val_loader)
        metrics = evaluator.state.metrics
        print(f"Validation Results - Epoch[{trainer.state.epoch}] "
              f"Avg accuracy: {metrics['accuracy']:.2f} "
              f"Avg loss: {metrics['loss']:.2f}")

Add ModelCheckpoint handler with accuracy as a score function

    model_checkpoint = ModelCheckpoint(
        "checkpoint",
        n_saved=2,
        filename_prefix="best",
        score_function=lambda e: e.state.metrics["accuracy"],
        score_name="accuracy",
    )

    evaluator.add_event_handler(Events.COMPLETED, model_checkpoint, {"model": model})
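
The combination of `n_saved=2` and a score function amounts to "keep the two best-scoring checkpoints". The sketch below illustrates that retention rule with a hypothetical `TopNKeeper` class and made-up accuracies; it is an assumption-laden toy, not the `ModelCheckpoint` implementation, and it writes no files.

```python
class TopNKeeper:
    """Toy retention policy: remember only the n highest-scoring entries."""

    def __init__(self, n_saved):
        self.n_saved = n_saved
        self.saved = []  # list of (score, name), kept sorted by ascending score

    def __call__(self, score, name):
        # Accept while there is room, or when the new score beats the current worst
        if len(self.saved) < self.n_saved or score > self.saved[0][0]:
            self.saved.append((score, name))
            self.saved.sort()
            if len(self.saved) > self.n_saved:
                self.saved.pop(0)  # drop the lowest-scoring entry


keeper = TopNKeeper(n_saved=2)
# Hypothetical validation accuracies for epochs 1..4
for epoch, accuracy in enumerate([0.97, 0.98, 0.96, 0.99], start=1):
    keeper(accuracy, f"epoch_{epoch}")

kept = [name for _, name in keeper.saved]
print(kept)
```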

Add Tensorboard Logger

    tb_logger = TensorboardLogger(log_dir="tb-logger")

    tb_logger.attach_output_handler(
        trainer,
        event_name=Events.ITERATION_COMPLETED(every=100),
        tag="training",
        output_transform=lambda loss: {"batch_loss": loss},
    )

    tb_logger.attach_output_handler(
        evaluator,
        event_name=Events.EPOCH_COMPLETED,
        tag="validation",
        metric_names="all",
        global_step_transform=global_step_from_engine(trainer)
    )

🚀Liftoff!🚀

    trainer.run(train_loader, max_epochs=5)

    Epoch[1], Iter[100] Loss: 0.19
    Epoch[1], Iter[200] Loss: 0.13
    Epoch[1], Iter[300] Loss: 0.08
    Epoch[1], Iter[400] Loss: 0.11
    Training Results - Epoch[1] Avg accuracy: 0.97 Avg loss: 0.09
    Validation Results - Epoch[1] Avg accuracy: 0.97 Avg loss: 0.08
    ...
    Epoch[5], Iter[1900] Loss: 0.02
    Epoch[5], Iter[2000] Loss: 0.11
    Epoch[5], Iter[2100] Loss: 0.05
    Epoch[5], Iter[2200] Loss: 0.02
    Epoch[5], Iter[2300] Loss: 0.01
    Training Results - Epoch[5] Avg accuracy: 0.99 Avg loss: 0.02
    Validation Results - Epoch[5] Avg accuracy: 0.99 Avg loss: 0.03

Complete code

PyTorch-Ignite Code-Generator

https://code-generator.pytorch-ignite.ai/

What is Code-Generator?: a web app to quickly produce quick-start Python code for common deep learning training tasks.

Why use Code-Generator?: start working on a task without rewriting everything from scratch.

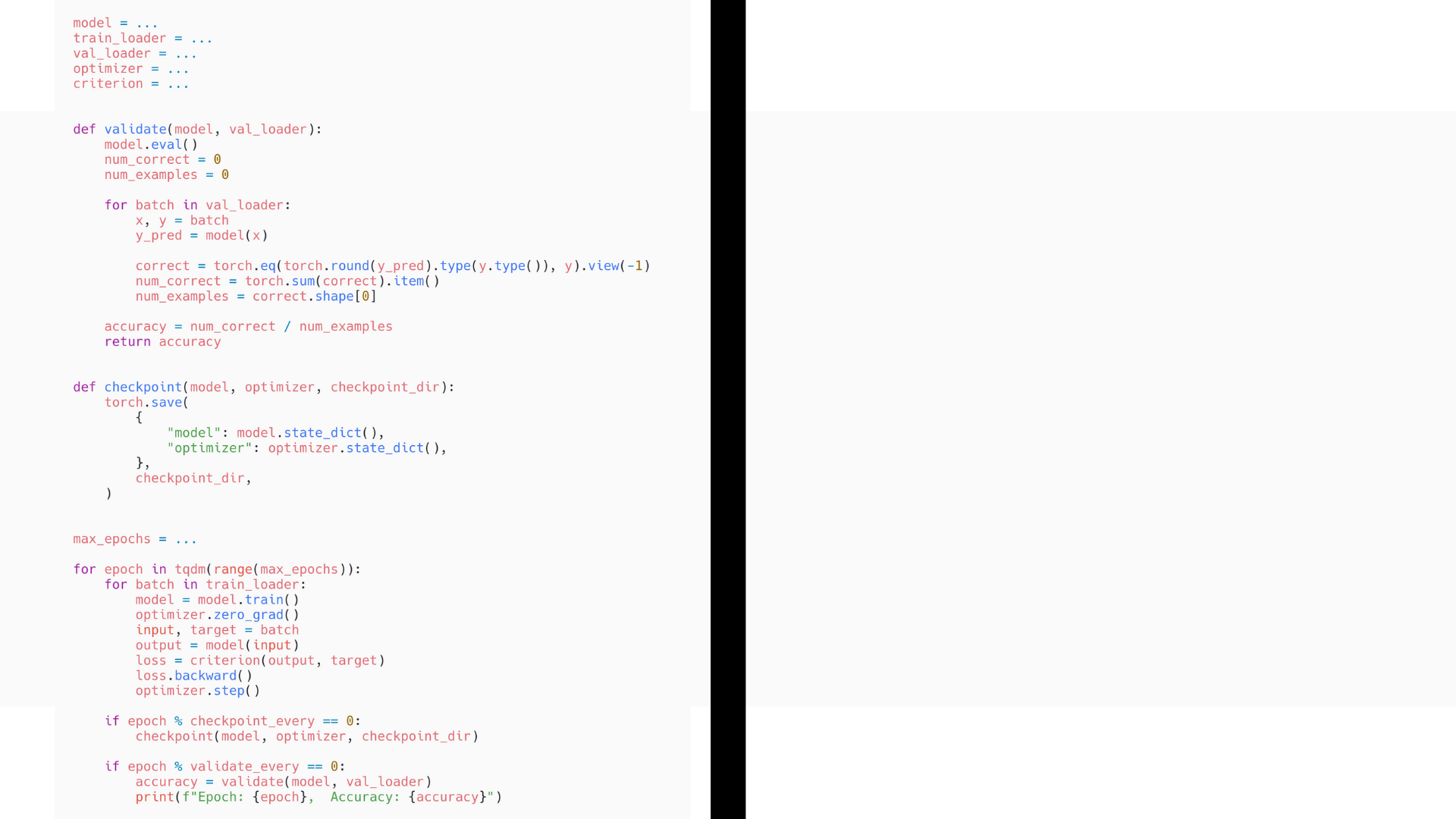

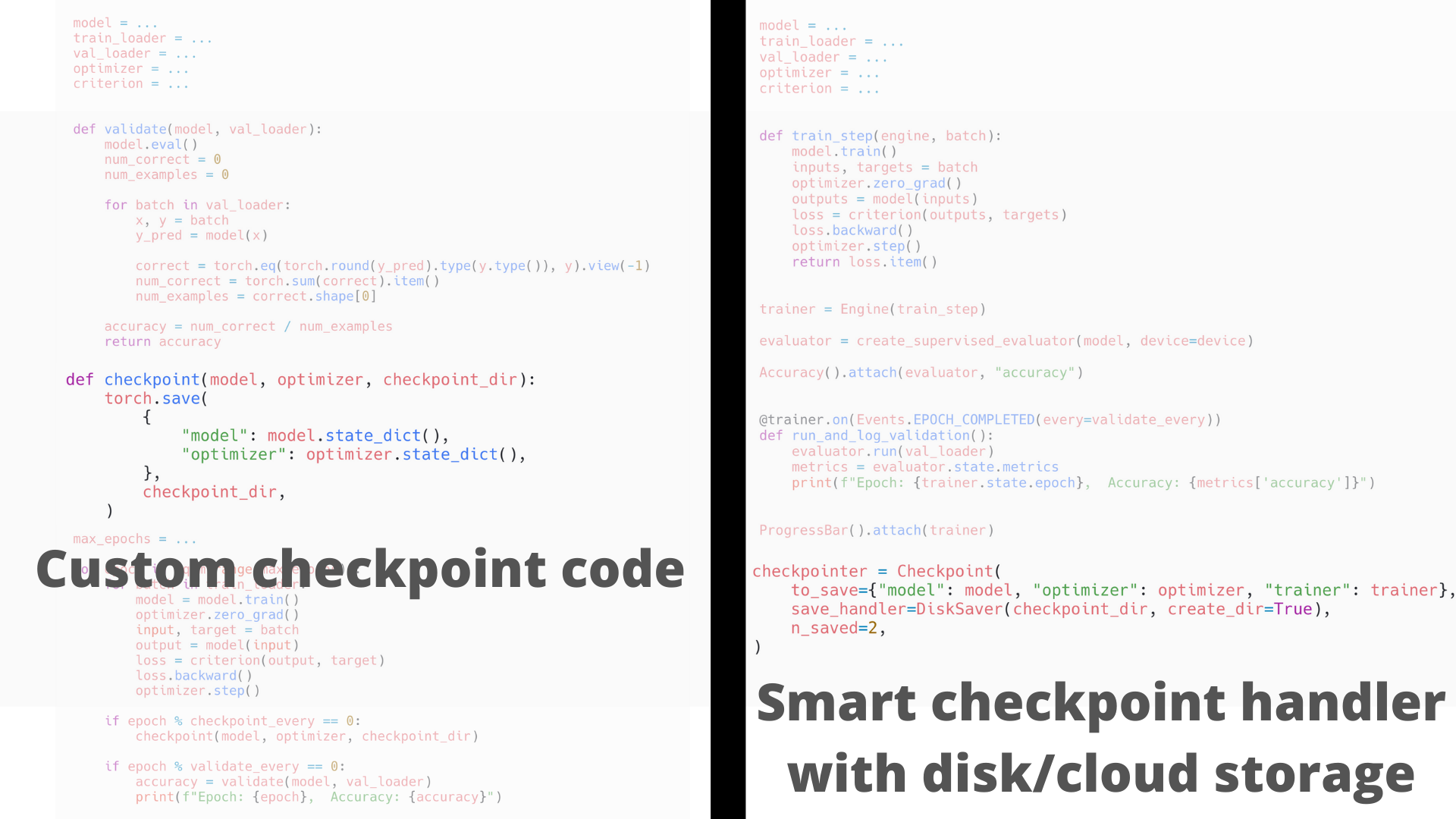

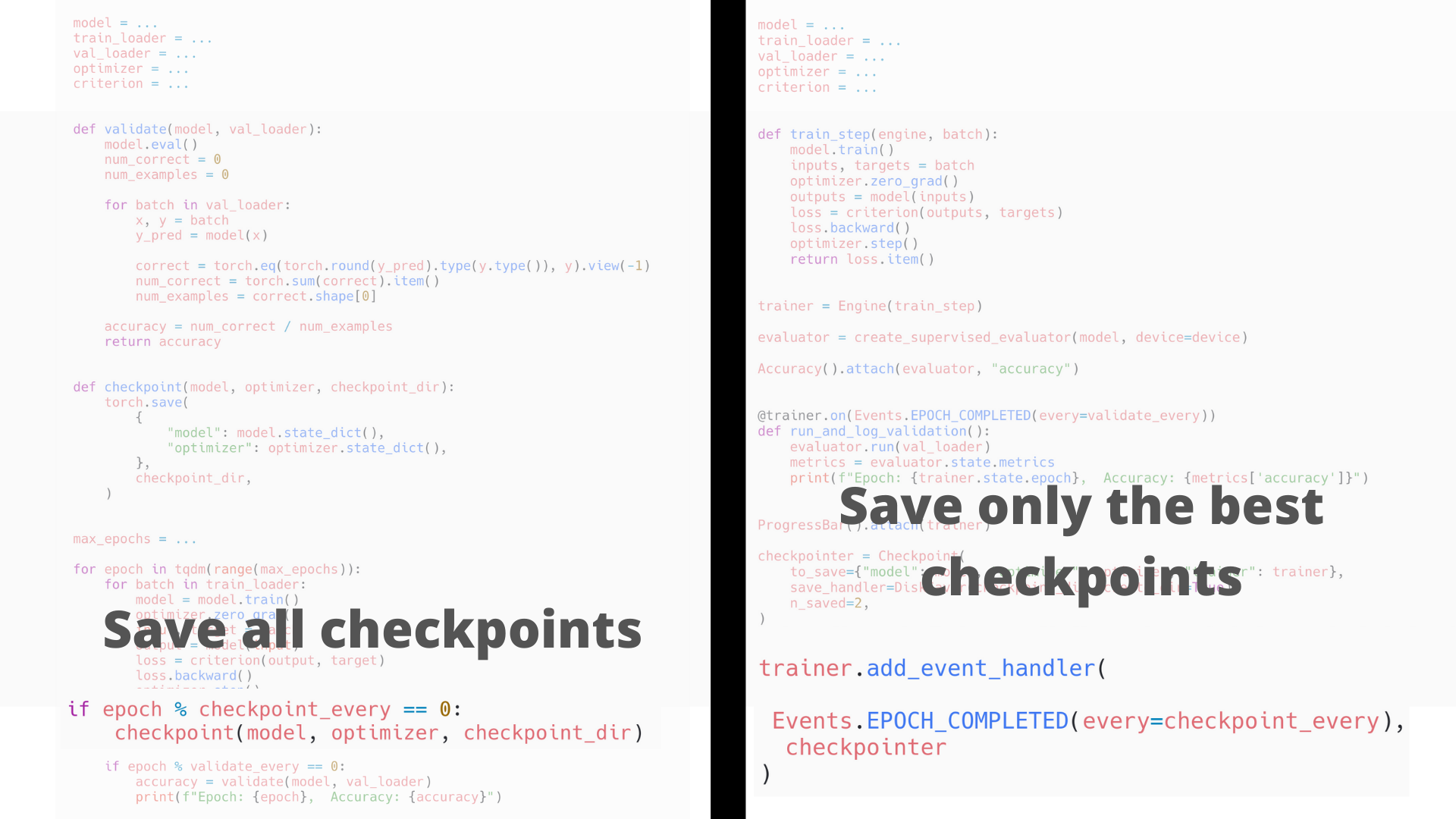

🔥 Convert PyTorch to Ignite ❤️🔥

How to translate pure PyTorch code to PyTorch+Ignite

About “PyTorch-Ignite” project

Community-driven open source and NumFOCUS Affiliated Project

maintained by volunteers in the PyTorch community:

@vfdev-5, @ydcjeff, @KickItLikeShika, @sdesrozis, @alykhantejani, @anmolsjoshi,

@trsvchn, @Moh-Yakoub, ..., @fco-dv, @gucifer, @Priyansi, ...

With the support of:

Projects using PyTorch-Ignite

- Research Papers

- Blog articles, tutorials, books

- Toolkits

- Project MONAI, Nussl, …

More details here: https://pytorch-ignite.ai/ecosystem/

Community Engagement

Google Summer of Code 2021

- Mentored two great students (Ahmed and Arpan)

Google Season of Docs 2021

- Working with a great tech writer (Priyansi)

Hacktoberfest 2020 and the upcoming 2021 edition

PyData Global Mentored Sprint 2020

Our new website development (thanks to Jeff Yang!)

Stay tuned for upcoming events …

Join the PyTorch-Ignite Community

We are looking for motivated contributors to help out with the project.

Everyone is welcome to contribute

How to start:

- Read our Contributing guides

- Pick an issue from the list of help-wanted GitHub issues

- Reach out to us on GH or Discord for more guidance

Thanks for watching and listening!

Questions? 🙋👩‍💻🙋👨‍💻👩‍💻

Follow us on and check out our new website: